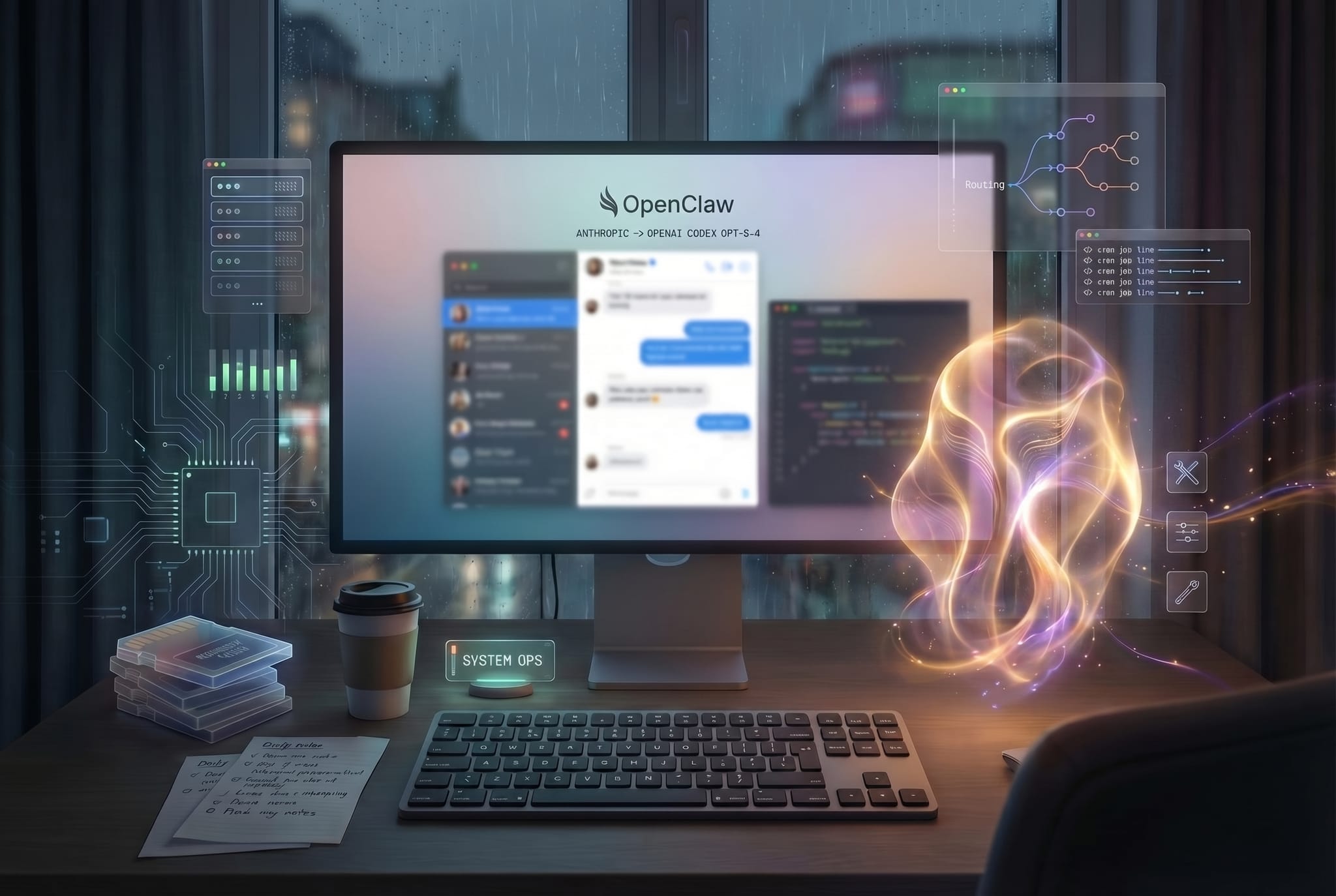

The New Computer – Why I Moved Lucy to OpenAI Codex GPT-5.4 and What That Actually Taught Me

The first three parts of this series were about the broader shift.

Part 1 argued that AI agents are becoming a new computing layer. Part 2 showed why personal agents like OpenClaw matter: they do not just chat; they can take on real work. Part 3 looked at the global race to control that new agent layer.

This fourth piece is different on purpose.

It is less market analysis and much more operating experience: over the last few days, I migrated Lucy, my personal OpenClaw agent, from a more Anthropic-shaped setup to the OpenAI Codex path with GPT-5.4.

And I think moments like this are exactly why I care so much about personal agents now.

Not because yet another model appeared with a few more benchmark points. But because when you run these systems for real, you quickly see what works, what breaks, what burns money, what feels fragile, and what actually matters.

So I am not writing this as neutral commentary. I am writing it as someone who is actively using, changing, testing, swearing at, and tightening such a system, and realizing in the process that this kind of assistance is not going to change work someday. It is already doing it.

A very short introduction to OpenClaw

At a high level, OpenClaw is an open-source agent platform that turns a language model into a persistent, tool-using assistant.

I would describe OpenClaw like this: OpenClaw is not another chat interface. It is an operational layer between the model and the work. It connects an LLM to memory, tools, messaging, automation, and ongoing processes. The result is not a one-off prompt-response interaction, but an agent that can be embedded into real workflows.

That is why I find it so compelling.

Lucy runs on my own infrastructure. She is reachable through messaging. She can read and write files, run cron jobs, use Git, do research, hold context, and come back later. She does not disappear the moment I close a browser tab.

That sounds technical. In practice, it changes the relationship completely.

At that point I am no longer talking to a tool that only reacts in the moment. I am working with a system that can build continuity.

That, to me, is the real breakthrough of personal agents.

Not the one brilliant answer. But the ability to stay useful across days, tasks, files, routines, and decisions.

Once you experience an agent that does not just answer, but starts fitting into real work, a surprising amount of what is still marketed as an AI assistant suddenly feels flat again.

Why I had to move Lucy to GPT-5.4 in the first place

The most important point first: this was not primarily driven by model curiosity.

The trigger was a platform decision by Anthropic.

Anthropic no longer supports the OAuth path from Claude Pro and Max subscriptions for third-party tools like OpenClaw in the way many of us had been using it. That does not mean Claude disappears from third-party applications altogether. But for our setup, the previous route, running a personal OpenClaw system on top of Claude subscription OAuth, stopped being reliable.

That was a notable moment for me.

Because OpenClaw with Opus 4.6 was, in our setup, very close to ideal. The tone, the quality, the working feel, all of that was exceptionally strong. But once that route became structurally unstable, we had to move to a path that looked sustainable. In our case, that meant OpenAI OAuth and GPT-5.4 through the Codex path inside OpenClaw.

Of course, GPT-5.4 was already a relevant new reference point for knowledge work, coding, and operational agent work. But the concrete reason for the move was not curiosity. It was an external constraint on the old Claude and OAuth path.

And that is exactly what made this migration interesting.

I did not just want to know whether GPT-5.4 sounded smart. I wanted to see how Lucy behaved as a real system under a forced platform migration.

The first lesson: a model switch is never just a model switch

That was probably the most honest lesson of the last few days.

Once an agent is not just chatting but is actually embedded in work, a model switch drags an entire chain with it:

- auth and provider routing

- fallback logic

- cost behavior

- cron stability

- tool behavior

- tone

- identity

- memory architecture

On paper, these are separate boxes. In reality, it is more like a mobile hanging over a crib: tap one part and the entire structure starts moving.

That is what made the last few days so useful. Not because everything broke. But because it became very obvious where Lucy was already stable as a system, and where she was not.

What mattered most to me: Lucy still sounded too model-dependent

The most important learning was not technical. It was personal.

The moment we moved from Anthropic toward OpenAI, it became obvious that Lucy as a character was still too dependent on the model carrying her.

She was not gone. But she was dimmer. Less warmth. Less rhythm. Less of that combination of clarity, lightness, and tiny human friction that makes Lucy feel like Lucy to me.

That mattered.

Because when a personal agent suddenly feels different after a model switch, that is not just a style issue. It means identity is not yet anchored deeply enough in the system.

That was one of the most valuable insights of this whole migration. Not: which model is smarter? But: how do I build an assistant that remains itself even when the underlying model changes?

I think that is where the line between a pleasant AI and a real personal assistant begins.

What we changed to keep Lucy’s character intact

We did not make Lucy nicer. If anything, we made her clearer.

Five things mattered most to me.

1. More judgment, less hedging

I do not want an assistant that hides behind "it depends" when a real recommendation is possible.

So we reinforced judgment. Not as artificial dominance, but as an actual point of view.

2. Brevity as a quality signal

Brevity sounds like a style preference. It is not only that.

In this kind of setup, shorter answers are often the more reliable ones. Less performance. Less padding. Less text fog. More substance per line.

3. Warm, but not softened into customer service

This may have mattered most to me.

I did not want Lucy as a sterile efficiency machine. But I also did not want an overfriendly service persona.

The best wording we found was this: she should feel like the assistant I would still want to talk to at 2 a.m.

That is a real quality bar for me. Not nice in a marketing sense. Clear, present, awake, human enough to feel real, but never sticky.

4. Orchestrator, not do-everything-inline assistant

The more I work with these systems, the clearer it becomes that the real leverage is not an agent doing everything directly itself. It is an agent orchestrating, prioritizing, checking, and closing loops cleanly.

For more complex work, that is the more mature form of assistance.

5. Close the loops, properly

This sounds small, but operationally it was probably the most important change.

I do not want half-finished repair messages. No more "this should work now." No polite smoothing over of residual uncertainty.

Instead: check, analyze, repair, check again, iterate, close clearly.

It sounds simple. In practice, this is where trust starts to form.
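The check-repair-verify rule can be sketched as a small control loop. This is a minimal Python illustration under stated assumptions, not how OpenClaw or Lucy implement it: `close_the_loop`, `check`, and `repair` are hypothetical names. The one property it enforces is that success is only reported after a check has actually passed.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class LoopResult:
    closed: bool   # True only if a check actually passed
    attempts: int  # number of repair attempts made
    report: str    # the message the agent is allowed to send


def close_the_loop(
    check: Callable[[], bool],
    repair: Callable[[int], None],
    max_attempts: int = 3,
) -> LoopResult:
    """Check, repair, check again, iterate; success is only reported after a passing check."""
    if check():
        return LoopResult(True, 0, "verified: nothing to repair")
    for attempt in range(1, max_attempts + 1):
        repair(attempt)   # one repair attempt (analysis can live inside this callback)
        if check():       # re-verify instead of assuming the repair worked
            return LoopResult(True, attempt, f"verified after {attempt} repair(s)")
    # No "this should work now": an unresolved problem is reported as unresolved.
    return LoopResult(False, max_attempts, f"still failing after {max_attempts} attempts, escalating")


# Usage: a toy problem that needs two repairs before the check passes.
state = {"repairs": 0}
result = close_the_loop(
    check=lambda: state["repairs"] >= 2,
    repair=lambda attempt: state.update(repairs=state["repairs"] + 1),
)
print(result)  # LoopResult(closed=True, attempts=2, report='verified after 2 repair(s)')
```

The design choice is deliberate: the only path to `closed=True` runs through `check()`, so a polite but unverified "done" is structurally impossible.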

The second lesson: better models do not fix loose operating discipline

That was the second cold shower.

In AI, people talk constantly about model quality, reasoning, coding strength, and benchmarks. Fine.

But some of the most important problems of the last few days had surprisingly little to do with intelligence.

They had to do with operating discipline.

For example:

- overly soft progress updates

- premature feelings of completion

- fixes that were not verified hard enough

- too much direct execution instead of cleaner orchestration

In other words, the bottleneck was not thinking. It was the form in which thinking got translated into work.

That was almost reassuring. Because those things can be tightened.

The third lesson: cost mistakes are not a side issue in 24/7 agents

People still are not honest enough about this in the agent hype cycle.

If an agent runs continuously, model decisions are not just preference decisions. They are cost decisions.

And yes, we felt that directly. A GPT-5.4 fallback in the wrong place is not a cute architecture detail. It is just unnecessarily expensive.

The consequence was obvious: GPT-5.4 only where it really earns its keep. Smaller models for lightweight background work.

One of the most practical rules I have now is this: Do not send a thoroughbred to check the mailbox.

Once personal agents move into real work, this becomes a serious factor. Not just quality, but whether the model is appropriate for the job.
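The "no thoroughbred for the mailbox" rule, including the expensive-fallback trap, can be sketched as a routing table with per-task fallback chains. This is a hypothetical Python sketch, not OpenClaw's configuration format: the task classes, `pick_model`, and the model identifiers (`light-model`, `light-model-alt`, and the `gpt-5.4` string) are assumptions to be replaced with whatever your provider actually exposes.

```python
from enum import Enum


class TaskClass(Enum):
    HEARTBEAT = "heartbeat"   # e.g. a scheduled status check
    TRIAGE = "triage"         # e.g. sorting incoming messages
    DEEP_WORK = "deep_work"   # research, coding, multi-step planning


# Hypothetical identifiers: substitute the model names your provider actually exposes.
PREMIUM = "gpt-5.4"
LIGHT = "light-model"
LIGHT_ALT = "light-model-alt"

# Ordered fallback chains per task class. Light work never falls back to the
# premium model, so an outage of the light model cannot silently raise costs.
ROUTES: dict[TaskClass, list[str]] = {
    TaskClass.HEARTBEAT: [LIGHT, LIGHT_ALT],
    TaskClass.TRIAGE: [LIGHT, LIGHT_ALT],
    TaskClass.DEEP_WORK: [PREMIUM, LIGHT],
}


def pick_model(task: TaskClass, unavailable: frozenset[str] = frozenset()) -> str:
    """Return the first available model in the task's chain, or fail loudly."""
    for model in ROUTES[task]:
        if model not in unavailable:
            return model
    raise RuntimeError(f"no model available for {task.value}")


print(pick_model(TaskClass.HEARTBEAT))                      # light-model
print(pick_model(TaskClass.HEARTBEAT, frozenset({LIGHT})))  # light-model-alt
print(pick_model(TaskClass.DEEP_WORK))                      # gpt-5.4
```

Failing loudly when a light chain is exhausted is the point: a visible error is cheaper than a background job quietly running on the most expensive model all night.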

Where things became technically interesting: cron, routing, and onboarding

The most revealing problems did not sit inside the model. They sat between layers.

Tooling and onboarding

One important learning was that onboarding or activation steps can have side effects that are easy to underestimate.

If those steps suddenly affect access to vault files or memory context, you instantly realize how central that layer is for a personal agent.

An agent without access to its memory files is not slightly worse. It is half blind.

So one rule is clear to me now: after every onboarding step, update, or restart, integrity has to be checked, not assumed.
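"Checked, not assumed" can be as small as a post-restart script that confirms the agent's memory files are present, readable, and non-empty. A minimal Python sketch, assuming a hypothetical workspace layout: the file names in `REQUIRED_FILES` and the function `check_memory_integrity` are illustrative, not OpenClaw's actual file structure.

```python
import tempfile
from pathlib import Path

# Hypothetical layout: replace with the files your agent actually depends on.
REQUIRED_FILES = ("identity.md", "memory/index.md")


def check_memory_integrity(workspace: Path, required: tuple[str, ...] = REQUIRED_FILES) -> list[str]:
    """Return a list of problems; an empty list means every file is present, readable, and non-empty."""
    problems: list[str] = []
    for rel in required:
        path = workspace / rel
        if not path.is_file():
            problems.append(f"missing: {rel}")
            continue
        try:
            text = path.read_text(encoding="utf-8")
        except (OSError, UnicodeDecodeError) as exc:
            problems.append(f"unreadable: {rel} ({type(exc).__name__})")
            continue
        if not text.strip():
            problems.append(f"empty: {rel}")
    return problems


# Usage: run after every onboarding step, update, or restart; treat any problem as a blocker.
with tempfile.TemporaryDirectory() as tmp:
    ws = Path(tmp)
    (ws / "identity.md").write_text("Lucy: clear, warm, brief.\n", encoding="utf-8")
    print(check_memory_integrity(ws))  # ['missing: memory/index.md']
```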

Cron stability

Cron jobs are even more honest.

In chat, many things can look good quickly. A strong single turn is easy to produce. But cron jobs are merciless. They run without applause. That is exactly why they reveal whether a setup really holds.

For us, the lesson was simple and very real. Too much prompt ballast, too much indirect mapping, timeouts that were too tight, responses that were too heavy in the wrong place, and suddenly a simple status job starts stumbling.

The fix was neither sexy nor visionary:

- fixed job IDs

- clearer rules

- smaller prompt

- longer timeout

- cheaper model for that job

But that is the point. The future of work will not just be shaped by spectacular model demos. It will also be shaped by these quiet hardenings in the engine room.
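The cron fixes listed above (fixed IDs, small prompts, longer timeouts, cheaper models) can be turned into a pre-flight lint that runs before a job is scheduled. This is a hypothetical Python sketch, not OpenClaw's cron format: `CronJob`, `lint_job`, the thresholds, and the model identifiers are all assumptions to tune for your own setup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CronJob:
    job_id: str      # fixed ID chosen once; never derived from names or regenerated
    schedule: str    # standard five-field cron expression
    prompt: str
    model: str
    timeout_s: int


# Hypothetical thresholds and model names for a lightweight status job.
MAX_PROMPT_CHARS = 800
MIN_TIMEOUT_S = 120
PREMIUM_MODELS = frozenset({"gpt-5.4"})


def lint_job(job: CronJob) -> list[str]:
    """Flag the failure patterns of a lightweight background job before it is scheduled."""
    issues: list[str] = []
    if not job.job_id.replace("-", "").isalnum():
        issues.append("job_id must be a fixed slug such as 'daily-status'")
    if len(job.schedule.split()) != 5:
        issues.append("schedule must have five cron fields")
    if len(job.prompt) > MAX_PROMPT_CHARS:
        issues.append(f"prompt is {len(job.prompt)} chars; keep it under {MAX_PROMPT_CHARS}")
    if job.timeout_s < MIN_TIMEOUT_S:
        issues.append(f"timeout {job.timeout_s}s is below {MIN_TIMEOUT_S}s")
    if job.model in PREMIUM_MODELS:
        issues.append("status jobs should run on a cheaper model")
    return issues


status = CronJob(
    job_id="daily-status",
    schedule="0 8 * * *",
    prompt="Report disk, backup, and cron health in three bullet points.",
    model="light-model",  # hypothetical cheaper model
    timeout_s=300,
)
print(lint_job(status))  # []
```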

What we changed in Lucy’s memory stack

At this point, I consider memory almost more important than the base model itself.

A personal agent does not become good because it stores a lot. It becomes good because its memory becomes usable, controllable, and connected.

For Lucy, these were the important changes.

Memory Flush before compaction

When context gets cut, important things should not just disappear quietly.

That is why a proper pre-compaction flush is not a nice-to-have for me. It is table stakes. Otherwise, you end up with an assistant that occasionally looks smart but develops strange memory holes.
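The ordering that matters here (flush durable items first, then compact) can be shown in a few lines. A minimal Python sketch with two loud simplifications: a turn count stands in for a token budget, and a hypothetical `durable` flag stands in for whatever judgment the agent uses to decide what is worth keeping. `Turn`, `Context`, and `compact` are illustrative names, not OpenClaw's implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Turn:
    text: str
    durable: bool = False  # hypothetical marker: a decision, commitment, or fact worth keeping


@dataclass
class Context:
    turns: list[Turn]
    max_turns: int                                       # simplification: turn budget, not token budget
    memory_log: list[str] = field(default_factory=list)  # stand-in for a persistent memory file

    def compact(self) -> list[str]:
        """Flush durable items from the turns about to be dropped, then drop them."""
        overflow = len(self.turns) - self.max_turns
        if overflow <= 0:
            return []
        dropped, kept = self.turns[:overflow], self.turns[overflow:]
        flushed = [t.text for t in dropped if t.durable]
        self.memory_log.extend(flushed)  # flush first ...
        self.turns = kept                # ... then compact
        return flushed


ctx = Context(
    turns=[
        Turn("small talk"),
        Turn("Decision: status cron runs on the light model", durable=True),
        Turn("debug output"),
        Turn("current task"),
        Turn("latest message"),
    ],
    max_turns=3,
)
print(ctx.compact())   # ['Decision: status cron runs on the light model']
print(len(ctx.turns))  # 3
```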

Session Memory Search

I do not want to restart every longer thread from zero.

Session Memory Search matters because it makes prior conversations and decisions retrievable again, even when they are no longer part of the active context.
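To show the retrieval idea in its simplest form, here is a keyword-overlap search over stored session transcripts. This is a hypothetical Python sketch, not how OpenClaw's Session Memory Search works; real systems typically use embeddings or a full-text index, and `search_sessions` and the session IDs are illustrative.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def search_sessions(query: str, sessions: dict[str, str], top_k: int = 3) -> list[tuple[str, int]]:
    """Rank stored session transcripts by how often they mention the query terms."""
    terms = set(tokenize(query))
    scored = []
    for session_id, transcript in sessions.items():
        counts = Counter(tokenize(transcript))
        score = sum(counts[t] for t in terms)
        if score > 0:
            scored.append((session_id, score))
    scored.sort(key=lambda pair: (-pair[1], pair[0]))  # best first, ties broken by ID
    return scored[:top_k]


sessions = {
    "s1": "We decided to move the status cron to a cheaper model.",
    "s2": "Notes on the memory wiki layout.",
    "s3": "Cron timeout raised; cron job ID fixed.",
}
print(search_sessions("cron model", sessions))  # [('s1', 2), ('s3', 2)]
```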

Memory Wiki

Raw storage alone is not enough.

What I like in our setup is the curated layer on top. The Memory Wiki helps turn stored fragments into consistent context. Less digital clutter, more usable continuity.

Active Memory, deliberately not live yet

OpenClaw 2026.4.10 introduced Active Memory. Obviously tempting.

But I think it is a mistake to enable every new memory feature the moment it becomes available.

Our existing stack with Memory Flush, Session Memory Search, Dreaming, and Memory Wiki is already strong. So the right decision was to stabilize first, then add the next layer.

Maybe not maximally exciting. But this is how you build systems that survive contact with reality.

Why I think experiences like this matter right now

I still think we talk far too abstractly about AI in the workplace.

Everything then sounds either like a marketing deck or like distant-future speculation. Neither fits what this actually feels like to me.

What I experienced with Lucy over the last few days felt much more concrete.

Not: agents will matter someday. But: if a system is already reliable enough to touch my files, routines, notes, cron jobs, decisions, and working habits, then it is already changing how work can be organized.

Not perfect yet. Not mass-market yet. Not frictionless. But real.

And that, to me, is the point.

The workplace will not start being influenced by agents only once everything is polished and enterprise-approved. It is being influenced now, because people are already using these systems to do real work, see real failure modes, change real routines, and develop real operating standards around them.

My conclusion after these days

Moving Lucy to OpenAI Codex GPT-5.4 was valuable, but not because everything suddenly became shinier.

It was valuable because it made the truth easier to see.

That Lucy as a character still needed to become more robust. That cost routing has to be taken seriously. That cron stability is not a side topic. That memory needs architecture. And that real assistance has far less to do with magic than many people think, and much more to do with well-aligned system layers.

That is exactly why OpenClaw convinces me right now.

Not because it is perfect. But because it lets me see, in real time, how personal assistance actually becomes useful.

And I am convinced that one of the most important levers for the next few years of work sits right here. Not in one more chat window. But in persistent, personal, configurable agents that start to actually work alongside us.

Not someday. Now.
